Part 1: Why Agentic AI Demands a New Kind of Visibility

The AI Trust Problem No One Is Talking About

|

Your AI product is live. Latency looks fine. Uptime is green. And yet, somewhere in production, your AI is quietly giving customers wrong answers, generating outputs your legal team would not approve, and spending far more on inference than it should. You just can’t see it yet, and that’s exactly the problem |

Today, inference accounts for 85% of the enterprise AI budget, with most organizations having little visibility into what’s driving that spend. The companies that win won’t necessarily be those with the smartest models, but those with the most disciplined compute strategies (AnalyticsWeek, 2026). The scenario above is the core leadership challenge of the agentic AI era. The systems your organization is deploying today (e.g., LLM-powered assistants, multi-step reasoning agents, intelligent extraction pipelines) do not fail the way traditional software does. Instead, they degrade silently, drift unpredictably, and create risk that standard monitoring tools were never designed to catch. AI Observability & Evaluation (O&E) is the control plane that closes this gap by continuously showing you what your AI systems are doingin the real world and judging whether that behavior is acceptable on the basis of quality, safety, and cost.

“Observability” is the traces, logs, and signals that let you reconstruct what actually happened inside an AI workflow. “Evaluation” is the scorecards, including quality, safety, cost, and user experience, that tell you whether that behavior is good enough for your business and risk profile. Together, O&E form a feedback loop: observe behavior, evaluate it against your standards, and act on what you learn.

As an enterprise AI consultancy, we spend time helping organizations move AI from a promising pilot to production-grade reality. That means we work at the intersection of LLM observability, AI evaluation frameworks, agentic AI monitoring, and enterprise AI governance. Across engagements in regulated industries, we have seen firsthand what happens when AI observability tooling is introduced late in the deployment cycle versus built into the architecture from day one. The difference shows up in debugging cycles, compliance audits, inference costs, and ultimately, in how confidently leadership can stand behind their AI-driven decisions.

In the following sections, I will stay out of vendor noise and focus on what leaders actually need to run AI in production. First, I’ll unpack why traditional monitoring fails for agentic systems and where that exposes your organization to hidden risk. Then, I’ll break down O&E into three practical pillars: seeing what actually happens in complex AI workflows, measuring how well it happened against your quality and safety bar, and enforcing guardrails when behavior is not acceptable. Finally, I will connect these pillars to the platform decisions and governance conversations your organization will inevitably face as your AI program scales.

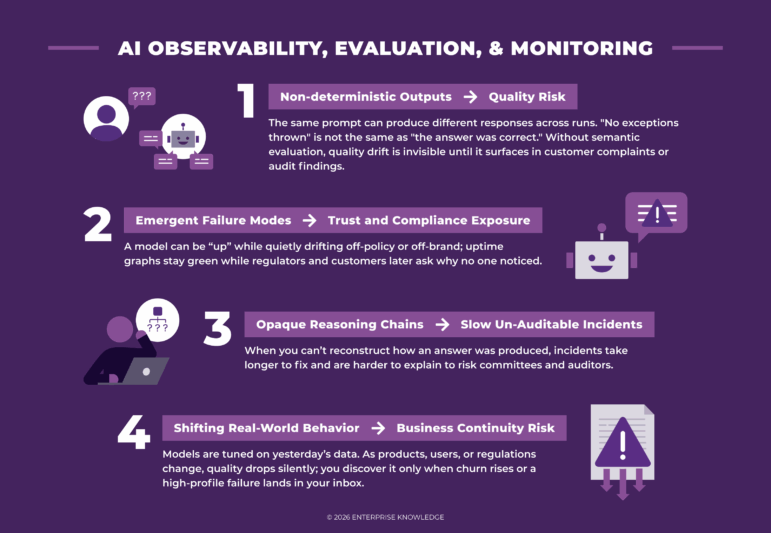

Why Traditional Monitoring Breaks Down for AI

Traditional software observability, such as metrics, logs, and traces, was built on the foundational assumption that the system’s logic is deterministic, encoded, auditable, and consistent. This means you can read the code and predict what it will do.

Agentic AI systems break that assumption at every layer.

Consider what happens when an AI agent processes a customer inquiry. It queries a knowledge base, reasons across multiple retrieved documents, generates a structured response, and routes it to a downstream system. Each step introduces unpredictability and potential failure that is invisible to conventional monitoring tools.

Investing in observability is not an engineering luxury. It is how AI programs earn and maintain organizational trust.

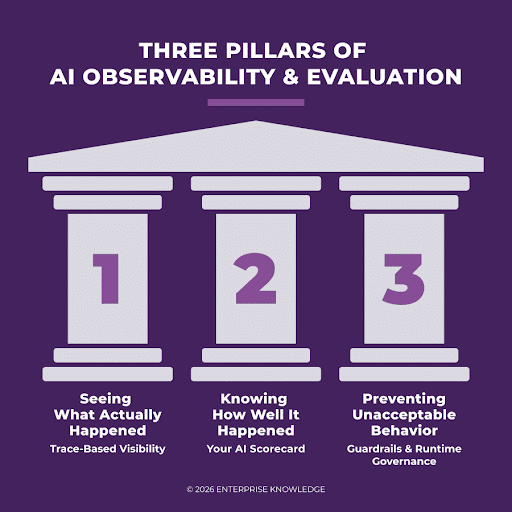

Three Pillars of AI Observability & Evaluation

There is no single approach that suits every AI workload. The right strategy depends on the complexity of the system, team maturity, and the risk profile of the use case. The most effective organizations build on three complementary pillars, often in combination. Trace-based visibility shows what happened, evaluation scorecards judge how well it happened, and guardrails turn those insights into real-time control over what is allowed to happen in the first place.

Pillar 1: Seeing What Actually Happened (Trace-Based Visibility)

Trace-based observability captures the full execution path of an AI workload as a connected sequence of operations: a model call, a retrieval step, a tool invocation, a downstream API request. Think of it as a flight recorder for your AI system.

What trace-based visibility surfaces for leaders:

- Which step in the agent pipeline caused a failure or delay

- Token usage and model call costs by workflow and user segment

- Error patterns and retry behavior that drive up latency and cost

- Complete interaction history for compliance and audit purposes

|

Tracing is how you move from “something went wrong” to “here is exactly what happened, when, and why” in minutes rather than days. |

Key trade-offs to manage: Instrumentation requires upfront engineering investment, particularly for complex agent orchestration frameworks. High-traffic systems generate large trace volumes, establishing data sampling and retention policies early to control storage costs. Crucially, traces capture raw inputs and outputs. Data masking or redaction should be implemented before traces reach shared storage to protect PII and proprietary content.

🏛️ Real-World Context: Financial Services Document Processing

A financial services team was losing days to intermittent pipeline failures that surfaced only as generic timeouts, with no way to isolate the root cause, across a multi-step document processing workflow with no audit trail for compliance.

They instrumented the full pipeline:

Ingestion → field extraction → validation → downstream system write, with span-level trace-based observability.

As a result, the team was able to pinpoint a specific document category triggering abnormal processing time and causing cascading latency downstream. The fix was deployed within hours, cutting a debugging cycle that previously took days with a documented audit trail the compliance team could reference.

Pillar 2: Knowing How Well It Happened (Your AI Scorecard)

While traces tell you what happened, metrics tell you how well it happened. Metric-based evaluation introduces quality signals that go beyond uptime. They ask whether the AI’s response was correct, complete, consistent, contextual, and compliant – the 5 C’s framework we pivoted on evaluating AI readiness.

Instead of a single accuracy number, leaders need a compact set of evaluation categories that show how AI behavior balances risk and business value. It asks the questions, is the AI correct and safe enough for our context, is it cost‑effective, and do users actually succeed with it?

The table below outlines a simple set of categories you can reuse across your organization’s AI use cases.

|

Category |

What it measures |

Why leaders care |

|

Quality |

Factuality, groundedness, relevance, hallucination rate |

Legal, compliance, customer trust exposure |

|

Safety |

Toxicity, policy adherence, PII exposure |

Regulatory risk and brand risk |

|

Cost & Efficiency |

Inference cost per task, latency |

Margin pressure and scalability |

|

User Experience |

Task completion, CSAT, re-use |

Revenue impact and adoption ROI |

Evaluation methods range from reference-based comparisons against curated “golden” datasets, to LLM‑as‑a-judge patterns where another model scores quality or policy alignment to structure human review by domain experts using rubrics. The strongest programs layer these. First, humans define and periodically refresh the golden set. Then, automated judges score large volumes. And finally, sampled production traffic is reviewed to catch new failure modes.

A word of caution for leaders: if you rely exclusively on automated evaluations you haven’t validated, you risk optimizing for a metric that diverges from real quality or safety. Before trusting automated scores in production, confirm on a sample that they agree with human judgment on your specific use cases and keep a small, evolving golden set plus dynamic samples from live traffic to re-check that alignment over time.

|

Evaluations are your AI program’s strategy function. They translate model behavior into business language and give you the data to ask and answer “Is this AI delivering on what we promised?” |

🏛️ Real‑World Context: Monitoring Credit Decision Quality

A retail bank deployed an LLM‑assisted workflow to draft credit decision rationales for underwriters. Uptime and latency were fine, but risk leaders could not see whether rationales were accurate or policy‑aligned.

The bank introduced an evaluation scorecard that tested factual alignment with source documents, inclusion of required risk factors, and adherence to underwriting language, and scoring a weekly sample. They discovered over a third of drafts omitted key risk factors. After refining prompts using low‑scoring examples, compliant rationales rose above 90% and review time per case dropped measurably, giving the risk committee evidence that the system strengthened control instead of eroding it.

Pillar 3: Preventing Unacceptable Behavior (Guardrails & Runtime Governance)

Observability and evaluation are retrospective, meaning they tell you what went wrong. For AI systems that operate in regulated industries or handle sensitive decisions, organizations need a proactive layer as well. A proactive layer would involve mechanisms that enforce policy before an output reaches a user or a downstream system.

This is not primarily an engineering decision. Boards, regulators, and risk committees increasingly expect documented evidence that AI outputs are governed. Guardrails are the operational enforcement layer of an AI governance policy — and the teams that build them thoughtfully create a competitive and compliance advantage.

Guardrails operate at two levels:

- Input guardrails intercept prompt injection attempts, sensitive data exposure (PII, credentials), or out-of-scope queries before they reach the model.

- Output guardrails screen generated responses for toxicity, policy violations, hallucination indicators, or off-topic content before delivery to users or downstream systems.

Critical governance considerations for leaders:

- Guardrail rules must be grounded in documented policies co-owned with legal, compliance, and business stakeholders — not defined unilaterally by engineering.

- Every guardrail trigger should be logged with full context (input, output, rule matched, timestamp, model version) to create auditable control points that satisfy internal risk review and external regulators.

- Thresholds should be calibrated against real production traffic before enabling automated blocking. Overly aggressive guardrails create alert fatigue and erode team trust in the system.

🏛️ Real-World Context: Guardrails Accelerating a Regulated Deployment

A pharmaceutical regulatory affairs team piloting an LLM-based clinical document summarization system implemented output guardrails as a prerequisite for FDA submission readiness sign-off. The guardrails flagged responses referencing unapproved drug indications, off-label use language, or patient-identifying information outside validated templates. During the pilot, 12% of outputs were intercepted and routed for medical-legal review. Preventing potential regulatory violations and generating a documented audit trail that satisfied the organization’s internal pharmacovigilance review board. Ultimately, production deployment was accelerated by six weeks.

Building Your AI Observability & Evaluation Framework

Understanding the three pillars is a necessary step, but not sufficient on its own. Up to this point, I have discussed the strategy – what it takes to see, evaluate, and control AI behavior. But strategy only creates value when it is operationalized, and operationalizing AI observability requires building a program.

Most organizations make the mistake of jumping straight to platform procurement. The result is tooling that fits the demo but not the production use case, or platforms that cannot scale to enterprise governance requirements. A durable approach is to treat O&E as an organizational capability, one that is designed deliberately, piloted rigorously, and sustained by the right operating model.

Step 1: Set Strategy with Interdisciplinary Stakeholders

AI observability is not a technology decision made by engineering alone. The first step is bringing together the right voices, including the business owners who define what “good” looks like, compliance and legal teams who define what “safe” looks like, and technology leaders who define what “feasible” looks like. Together, this group should align on:

- Which AI use cases carry the highest risk and therefore require the deepest observability?

- What evaluation standards apply (e.g., regulatory, contractual, or internal policy)?

- What governance thresholds trigger human review or escalation?

- How will observability data be reported to leadership and the board?

This alignment upfront enables O&E programs to measure what matters, rather than what is easy.

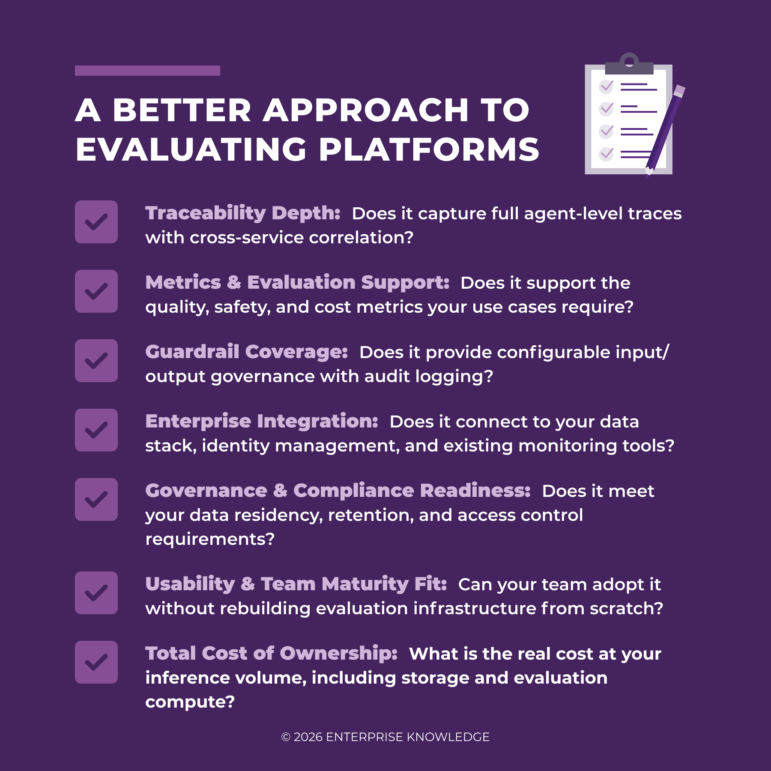

Step 2: Design Your O&E Architecture

Once strategy is set, the next step is designing an architecture that can implement the three pillars across your AI portfolio. This is not necessarily a single platform decision. Enterprise O&E programs often draw on a combination of components, including tracing infrastructure, evaluation frameworks, guardrail layers, and monitoring dashboards. The right question is not which tool should we buy, but which capabilities do we need and how should they connect.

Evaluate your architecture against these dimensions. The goal is an architecture that scales with your AI portfolio, not one that has to be rebuilt every time a new use case is introduced.

Step 3: Pilot End-to-End Implementation

Before scaling, run a structured pilot across a single AI use case that exercises all three pillars (tracing, metrics, and guardrails) end to end. A good pilot surfaces integration gaps, calibrates evaluation thresholds against real outputs, and builds internal confidence in the program before it is applied to higher-stakes deployments.

Step 4: Define the Operating Model That Sustains It

This is the step most organizations skip and where O&E programs most commonly stall. Technology alone does not make AI observable. It takes people with clear roles and processes that embed evaluation into the rhythm of how AI is developed and operated.

A sustainable O&E operating model typically requires the following components:

- An AI Governance Function: A cross-functional team or center of excellence that owns evaluation standards, reviews flagged outputs, and maintains the guardrail policy library.

- Defined Escalation Paths: Clear protocols for what happens when guardrails fire, metrics degrade, or traces reveal unexpected behavior.

- Evaluation Ownership by Use Case: Each AI product or deployment should have a named owner accountable for monitoring its quality and safety metrics.

- Regular Cadence Reviews: Scheduled reviews of O&E dashboards at the team, program, and executive level, with thresholds that trigger action not just reporting.

- Feedback Loops into Development: Observability findings should flow back into model fine-tuning, prompt revision, and guardrail updates, closing the loop between production behavior and system improvement.

The organizations that get the most value from AI observability are the ones that have made observability a shared organizational responsibility with the strategy, architecture, and operating model to back it up. In a follow-on article, we will discuss what we need to consider to build an enterprise O&E Framework.

Looking Ahead

Agentic AI fails in ways traditional monitoring cannot see, and unobserved AI carries very real costs in trust, compliance, and margin. This is why trace-based visibility, evaluation scorecards, and guardrails need to be treated as core infrastructure, not optional add‑ons. Observability and evaluation are not a one-time implementation exercise; they are an ongoing operational discipline and the mechanism by which AI programs earn organizational trust, respond decisively to production deviations, and scale responsibly.

Part 2 of this series moves from the what to the how at scale — a practical strategy for standing up an O&E program across your organization, including the maturity model we use to assess where teams are today and the structured platform evaluation methodology that removes opinion from the tooling decision.

If your team is navigating platform selection, benchmark design, or evaluation methodology for an agentic AI system, you should not be doing it in the dark. EK has helped organizations turn vague concerns about “AI risk” into concrete observability and evaluation programs. Contact our team to pressure‑test your current approach and design a control plane that fits your specific use cases and constraints.