Data and information security is often seen as a necessary evil that slows down the implementation of valuable new systems. We have all waited for security approval before launching a new system. However, AI is changing the narrative, and KM professionals must adjust. Security is no longer just a requirement to safeguard information assets; it is now an enabler of AI systems.

If you are implementing AI while still relying on application-specific security managed by groups or roles, you are in trouble. In our work using semantic layers to help organizations launch AI, we’ve seen a recurring theme: nearly every project is delayed because the client failed to consider a Unified Entitlements model at the start of their journey.

What Is Unified Entitlements?

Unified Entitlements provides a holistic definition of access rights, enabling consistent and correct privileges across every system and asset type in the organization. These information assets may include documents in SharePoint, wiki pages, discussion threads in Microsoft Teams or Slack, or datasets in a data lake. Because each of these systems traditionally relies on its own model for securing information, most organizations suffer from an inconsistent application of entitlement rules across their knowledge ecosystem.

Most security models are designed to prevent unauthorized access to data within specific systems. People are assigned to role-based groups, which limit authorization within each application. The problem with this approach is twofold:

- AI agents are not human users: They act as a proxy for one or more users.

- AI agents are system-agnostic: They find data across all applications, not just one.

These siloed security models are not designed to protect data from agents. An enterprise solution needs to understand both the role of the agent and the identity of the person making the request, while applying security rules consistently across all data sources.
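To make the idea concrete, here is a minimal sketch of an access decision that weighs both the agent's role and the requesting user's identity, using the same rule for every source system. All of the names here (`Asset`, `User`, `Agent`, `can_read`, `filter_results`) and the entitlement labels are hypothetical and chosen for illustration; a real deployment would draw this information from an identity provider and a policy engine rather than in-memory objects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """An information asset in any source system (illustrative model)."""
    asset_id: str
    source: str                       # e.g. "sharepoint", "slack", "data_lake"
    required_entitlements: frozenset  # entitlements a reader must hold


@dataclass(frozen=True)
class User:
    """The person on whose behalf the agent is acting."""
    user_id: str
    entitlements: frozenset


@dataclass(frozen=True)
class Agent:
    """An AI agent whose role limits which systems it may query."""
    agent_id: str
    allowed_sources: frozenset


def can_read(agent: Agent, user: User, asset: Asset) -> bool:
    """Grant access only when BOTH checks pass:
    1. the agent's role covers the asset's source system, and
    2. the requesting user holds every entitlement the asset requires.
    """
    if asset.source not in agent.allowed_sources:
        return False
    return asset.required_entitlements <= user.entitlements


def filter_results(agent: Agent, user: User, assets: list) -> list:
    """Drop anything the agent may not return to this user, in any system."""
    return [asset for asset in assets if can_read(agent, user, asset)]
```

In this sketch, the same `can_read` rule runs whether the asset is a SharePoint document, a Slack thread, or a dataset, so an agent cannot return content that the requesting user could not have opened directly.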

Failing to implement Unified Entitlements can be catastrophic. The following are true stories of what happens when security is not considered up front:

- White-hat hackers took over the AI bot of an international consulting firm and downloaded millions of private records in less than two hours.

- Several organizations accidentally left a portion of their AI stack open to the public, leaving HR policies, employee reviews, and proprietary software code discoverable through Google.

- A standard messaging platform accidentally allowed the AI chatbot access to private channels, exposing customer and research information across the company.

Problems like these don’t just slow down AI initiatives. They cost companies millions of dollars and cost the people implementing these solutions their jobs.

Why Do Traditional Security Models Slow AI Initiatives?

Most of our clients begin their AI journey with one or two low-risk repositories. This is a smart approach, as it allows them to start quickly and learn. However, they soon discover the sheer volume of data AI tools can retrieve and aggregate across hundreds of thousands of documents. This access to information is exciting but very risky.

As the scale of easily accessible data becomes apparent, security becomes paramount. Valuable datasets such as client data, health information, and personal financial information must be properly secured before they can be added to the AI platform. In nearly every AI project we undertake, a momentum-killing pause occurs when our clients realize their information security models are insufficient to protect these critical assets. This delay not only stifles initial momentum but also severely slows the realization of ROI from accessing the organization’s most important datasets.

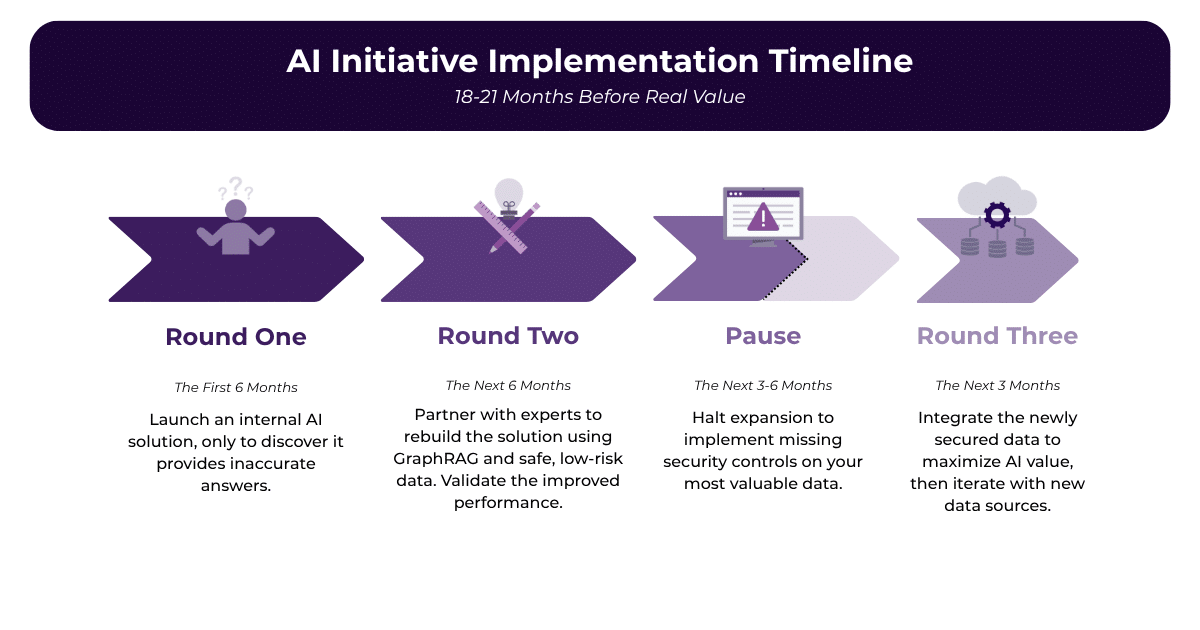

We have had several recent clients who follow this pattern:

AI is one of the most important initiatives in many organizations today. Yet the timeline above takes just under two years to deliver real value, and it includes several visible failure points that slow adoption and create mistrust among users.

Prioritizing Unified Entitlements: The Parallel Approach

We now recommend a different AI approach for our clients. The rollout of the first AI solution should begin simultaneously with the development of a Unified Entitlements framework. While the AI tool launches using the safest repositories, the security team concurrently implements robust controls for sensitive data. Working in parallel ensures that critical data assets are properly secured when the AI platform is ready to add them.
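One way to picture the parallel approach is as a simple gate on which repositories the AI platform may index at any point in time. The sketch below is illustrative only: the `Repository` fields, the `eligible_for_ai` rule, and the `rollout_plan` helper are assumptions made for this example, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Repository:
    """A candidate data source for the AI platform (illustrative model)."""
    name: str
    sensitive: bool            # holds client, health, or financial data
    entitlements_ready: bool   # unified entitlement rules applied and verified


def eligible_for_ai(repo: Repository) -> bool:
    """Low-risk repositories can be indexed immediately; sensitive ones
    wait until the entitlements work for them is complete."""
    return (not repo.sensitive) or repo.entitlements_ready


def rollout_plan(repos: list) -> dict:
    """Split repositories into those safe to index now and those still
    waiting on the security workstream."""
    plan = {"index_now": [], "blocked_on_entitlements": []}
    for repo in repos:
        key = "index_now" if eligible_for_ai(repo) else "blocked_on_entitlements"
        plan[key].append(repo.name)
    return plan
```

Re-running `rollout_plan` as the security team marks more repositories as ready moves them from the blocked list to the indexable list, which mirrors the phased rollout described above.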

This strategy allows the initial rollout to demonstrate immediate value. Subsequent phases then generate even greater excitement as more critical data becomes safely available to business users. Organizations that adopt this parallel approach not only prove ROI much faster, but also leverage that early momentum to boost employee engagement and pivot quickly toward new, high-return AI use cases.

Is security a growing concern for your AI initiative? Learn how we can help build your Unified Entitlements model here.